This one is a fair bit off my usual posting schedule, thanks to crunch time on this project and the holidays eating our organization for lunch. So here it is: Post #1 of the Starship Actium (ATKRP) Project.

The setting for this one is a fair whack different from the Stiletto project, but in a very similar fashion, it still tells the story of daily life for the characters aboard a ship. The Starship Actium takes place in the Battletech universe and follows the Actium Knights, a highly successful mercenary group that has somehow found themselves in possession of a 700-year-old Dart-class warship. Like the Stiletto project, this one also runs on the VRChat platform. This time, however, it’s more than just myself, Kaderen, and Esarai running the project: we have Haven and Sprixer running the administration, 404_SNF and TalentlessOrdinary heading up the DM department, Advocat on NPC modeling, and of course us three heading up the map development.

Now because of the crunch and the fact that this project has been hectic since we started, this post will cover a hell of a lot of points, so we’re going to be jumping around a fair bit. So without further ado, let’s get to it.

Getting Her Running

I was brought onto the project very shortly before the first Public Workshop stream took place on the map. This stream was geared to take in all interested parties and get them set up to improv with others. Completely non-canon, but still in the same universe and mindset. Unfortunately, this whole event ran at a blazing 20 FPS in VR, which is not great on the eyes.

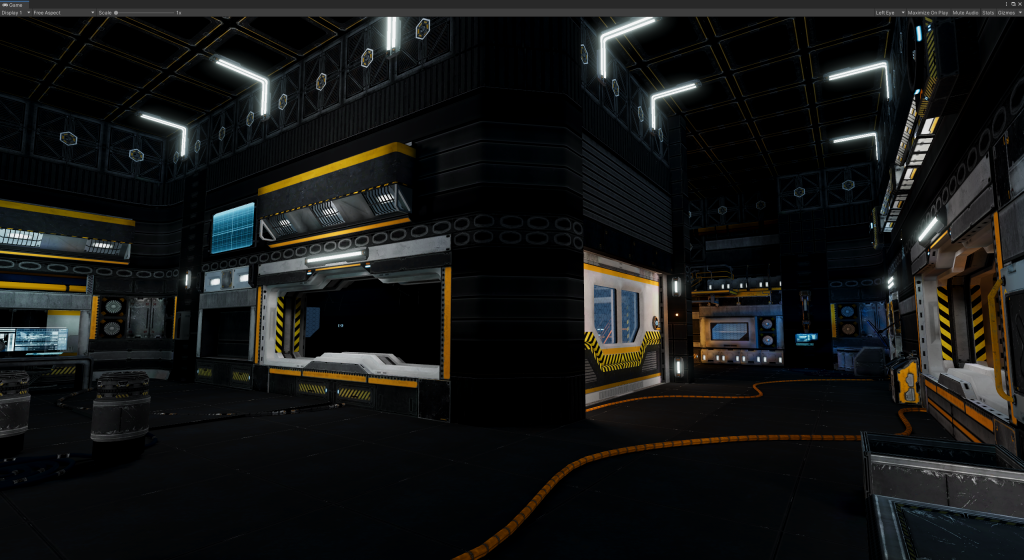

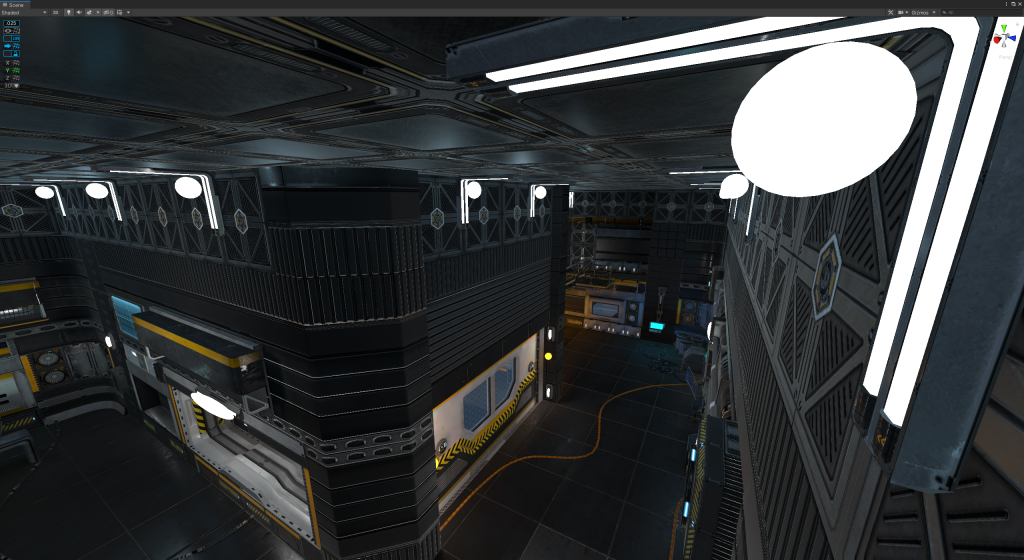

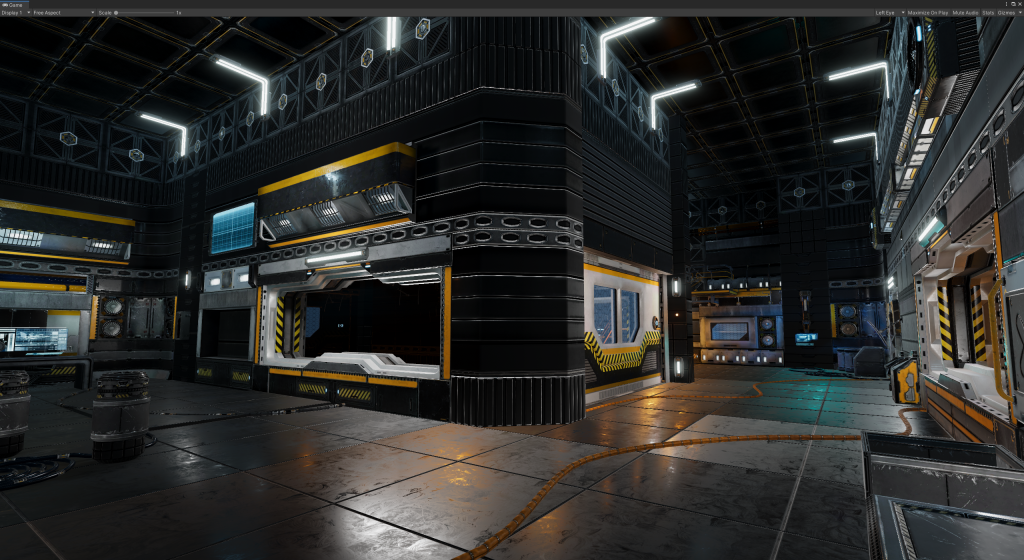

When Kaderen and I initially dug into the map, we found a hell of a lot of Realtime and Mixed lights (many of which were shadowcasters!), and no properly baked Occlusion Culling. This combination was absolutely annihilating the FPS even without anyone in the map. So, I dropped my copy of Bakery into the project and we (mostly Kaderen) got to work converting all the lights over to Bakery format.

After deleting all the realtime lights, adding Bakery scripts, toying with Reflection Probes, baking Occlusion Culling, and adding Light Probes, we boosted the map’s FPS up to a solid 90 in almost all cases. Luckily, the very constrained nature of the Actium’s hallways makes for ideal usage of Occlusion Culling.

However, now we had a different problem. The original version of the map looked very shiny, as all the lights freaking everywhere gave the map a very heavy sheen. Now, with the baked lighting, it lost an absolute crapton of its luster. In our drive to save the frames, we lost the glitter.

When in Doubt, Fake It

After a short think, Kaderen and I posted almost simultaneously about a possible way to solve this: The Reflection Probes. In Unity, you can set objects to render on certain Layers, and the Reflection Probes are an object that can choose which Layers to render (a Layer Mask). With this, we could take every single light prefab, spawn a spheroid on it that roughly matches the shape and size of the visible light, give it a heavily emissive material (around 50x Emission Strength), and set it all onto a layer that should only render on Reflection Probes. The project has them all labeled “Fake Light”, but I can’t help but call them Glowy M&Ms due to their shape.
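As a rough illustration of how a Layer Mask works: Unity stores it as a 32-bit integer with one bit per layer, so "which layers does this thing render" is just a bitwise check. The sketch below is plain Python rather than Unity code, and the layer number used for “Fake Light” is an assumption picked for the example, not the project's actual setting.

```python
# A layer mask is a bitfield: bit N set means "layer N is included".
DEFAULT_LAYER = 0       # Unity's built-in Default layer
FAKE_LIGHT_LAYER = 22   # hypothetical slot for the "Fake Light" layer

EVERYTHING = 0xFFFFFFFF  # all 32 layer bits set


def layer_bit(layer: int) -> int:
    """Return the mask with only the given layer's bit set."""
    return 1 << layer


def renders(mask: int, layer: int) -> bool:
    """True if an object on `layer` is drawn by something using `mask`."""
    return (mask & layer_bit(layer)) != 0


# A normal camera: everything except the fake lights.
camera_mask = EVERYTHING & ~layer_bit(FAKE_LIGHT_LAYER)

# A Reflection Probe: the regular world geometry plus the fake lights.
probe_mask = layer_bit(DEFAULT_LAYER) | layer_bit(FAKE_LIGHT_LAYER)
```

With masks like these, the Glowy M&Ms show up in what the probes capture but never in what a player's camera draws directly.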

What this does is boost the absolute crap out of the “glow” of the lights in the reflections, blooming them out and making them much more apparent in the reflections on metallic objects than the Reflection Probe would normally render. If you’ve toyed at all with Unity, you may be asking, “Why not just boost the Reflection Probe Intensity?” The answer to this is that boosting the intensity is like cranking your brightness settings; all it does is wash the entire thing out and lose all the contrast. This method prevents that by only making the light sources more intense.

The Reactor Room

For my first main project for the ATKRP map, I took notice of a certain very tall and very empty room that we had been dubbing the “Star Wars Room”, due to its giant pit and narrow catwalks. In this, I was inspired to create my own take on a giant freakin’ fusion reactor to slot right on in there.

The very first thing I did was to make a new Empty GameObject, and toss it at the extents of the room in order to get its dimensions. Turns out, the entire pit is exactly 50 meters tall from floor to roof, for a fun bit of trivia. So with those numbers in hand, I took to Blender and started with the primitive I hate the most: the humble Cylinder. I made it 50 meters tall and some amount across that I can’t quite remember at the moment, and made heavy use of the Loop Cut and Extrude functions in order to get the base shape.

While I was working on the thing, I noticed that I was basically keeping some form of a 4-fold symmetry across the model, mostly by accident. Because of this, the UV Mapping process became really easy. Essentially, I cut the model into 4ths, vertically, and from there all I had to do was UV Map about 25% of the model and copy that main section 4 times in a circle to finish the hull. This allowed for a lot more texture space for details without having a huge 8K map for the 50 meter tall glorified tube that was to become the reactor.
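To put rough numbers on the texture-space saving, here is a back-of-the-envelope sketch in Python. The reactor's diameter is not stated above, so the 10 meter figure is purely an assumption, and the calculation only looks at detail around the circumference, ignoring the 50 meter height.

```python
import math


def texels_per_meter(texture_px: int, diameter_m: float, symmetry: int = 1) -> float:
    """Horizontal texel density around a cylinder whose hull is unwrapped
    for 1/symmetry of its circumference and then repeated around the rest."""
    unwrapped_width_m = math.pi * diameter_m / symmetry
    return texture_px / unwrapped_width_m


DIAMETER_M = 10.0  # assumed for illustration; the real width is not given

# Unwrapping the whole hull onto an 8K-wide map...
full_wrap_8k = texels_per_meter(8192, DIAMETER_M)

# ...versus unwrapping one quarter onto a 2K-wide map and repeating it 4 times.
quarter_wrap_2k = texels_per_meter(2048, DIAMETER_M, symmetry=4)

print(f"full wrap, 8K:    {full_wrap_8k:.1f} px/m")
print(f"quarter wrap, 2K: {quarter_wrap_2k:.1f} px/m")
```

Both lines print the same density, which is the whole trick: with 4-fold symmetry, a map a quarter of the width carries the same detail around the hull.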

At some point, the Reactor Glow also got turned from Orange to Pink, to match the actual coloration of the D-T Fusion process’s physical color.

Yet More Modeling

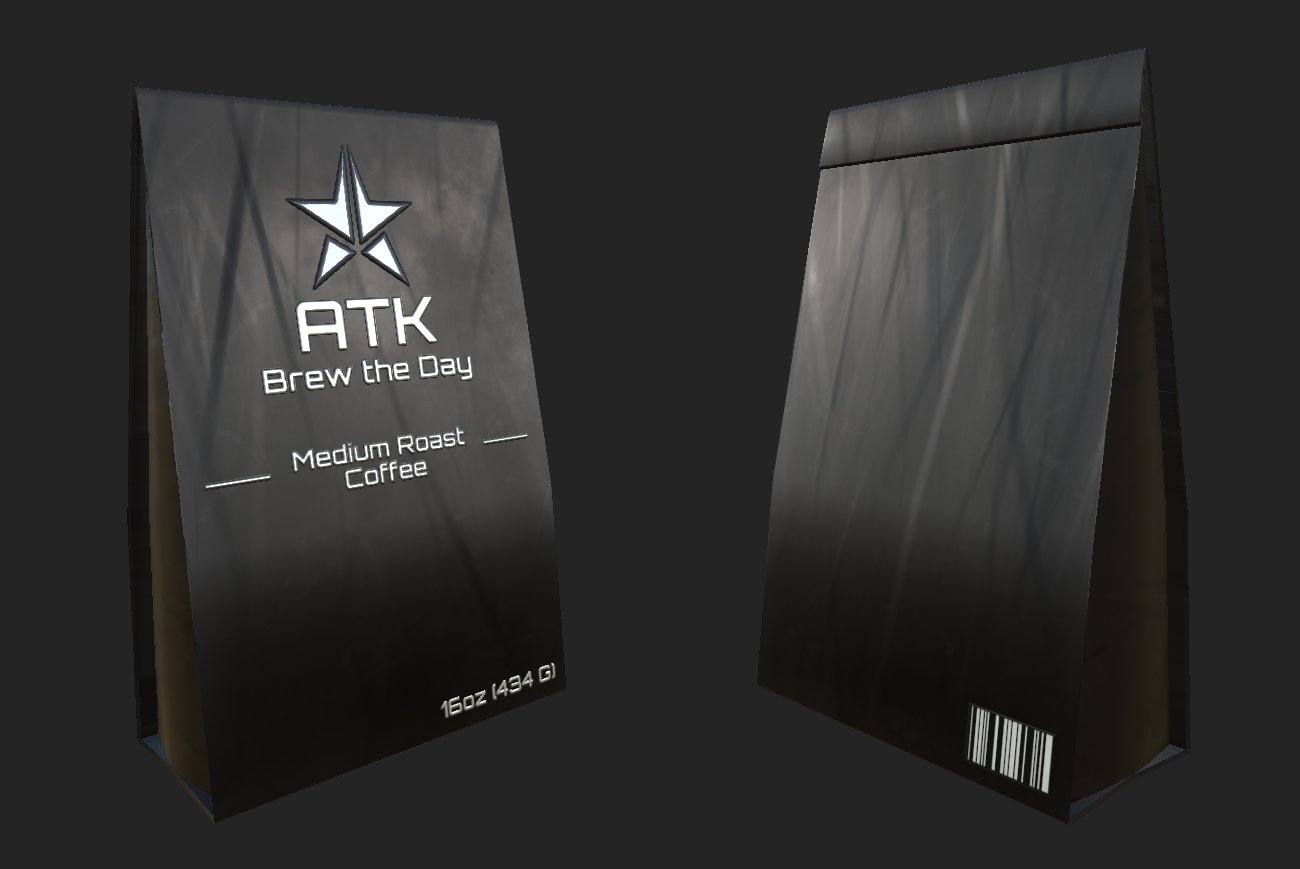

This section is going to be a lightning round, as this article is getting quite long and we still have a bunch to cover. So to start with, coffee:

I made this model during a stream in which Haven, Sprixer, and 404 played Stardew Valley in-character, and “Major Darling” professed his love for Coffee and wanted a personal brand. So, my pun-riddled brain hopped into Blender/Substance and whipped this up in less than an hour.

What good is a gritty low-tech sci-fi story without shitty vending machine food? This pack was made mostly just with tapered cuboids and liberal usage of Substance Painter’s Projection mapping to throw JPGs onto the textures.

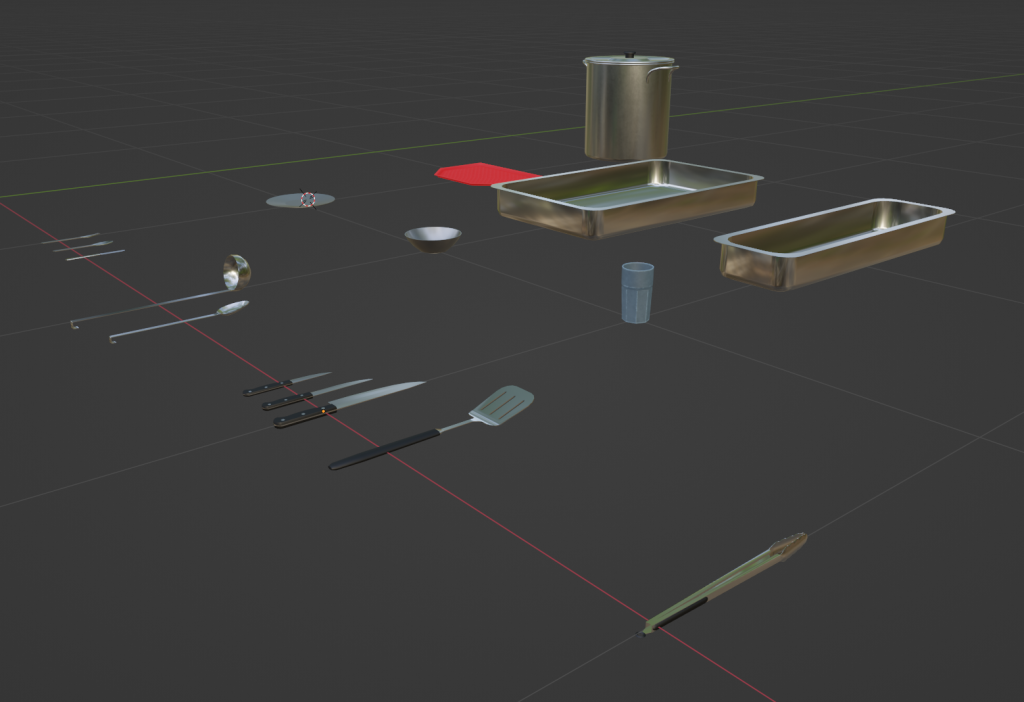

No fancy Cycles render for this one just yet, as it’s still a work in progress (and probably will be for some time because I am a slave to scope creep). We needed a bunch of props to fill out the Mess Hall area, so I cranked out a bunch of mid-grade models. I realized that I could save a hell of a lot of texture space by just using simple PBR channel settings instead of actual textures on most of the pack, so the only things with actual texture mapping are the Pot, Tray, Plate, Bowl, and Cup. The final product is supposed to let you throw foods into the dishes, but that’s a ways off due to both scope creep (I have too many foods planned out) and the fact that VRC’s networking makes syncing which foods are “active” and which objects are currently on the Tray a right bear to deal with.

Also the tongs clack, which is the best part, and I brought my tongs in from the kitchen and clacked them into my microphone to get the sound. That being said, I am really keen on how well the Chef’s Knife came out, considering it’s just two PBR flats instead of actual texture work.

You Daft Git(lab)

If you’ve tracked the SRP DevLog at all, you’ll know that I’ve had a storied history with Unity’s Collab solution. If you haven’t, I can quickly sum it up with, “Collab bad, Collab lose files and no work right”. Haven was, unfortunately, unconvinced when the project started, and we ended back in the hell pit of Collab and Trello for a short while.

I say “short while” because, thankfully (and regrettably), Haven finally came to learn how absolutely dogshit Collab actually is, and finally agreed that we need to move to something more serious. One Workshop episode later, and I’ve ported us all over to my personal Gitlab server. Unfortunately, this road was not without its speed bumps.

To begin with, only the Asset Dev team has had any real experience with Gitlab prior to this project. This came with a plethora of hiccups and training pains and other such issues as we tried to get everyone up to speed with the very helpful (if rather opaque) toolkit that Gitlab provides to us. It was a hell of a lot of churn to have those not of the Development sphere bounce from Discord to Trello and finally to Gitlab, but I think we got there in the end.

Our issues are also continuously compounded by the fact that the way Unity is designed is not very Git-friendly. The only reason why Collab doesn’t constantly shit the bed is because it forces you to pull the latest changes BEFORE you push your own. Git, however, lets everyone commit locally against whatever copy of the project they last pulled and merge the results afterwards, so two people’s changes to the same scene or prefab can end up combined in ways that neither of them intended. This can lead to lots of weird conflicts and lost data if one isn’t careful. We’ll eventually get the kinks ironed out, but until then, the growing pains are definitely real.

For the next few sections until closing, I’m going to toss the log on over to the other members of the Dev Team, starting with Kaderen.

Pickup Management

Kaderen here, back again and taking over for the next two segments or so. Firstly, let’s talk about pickups, their presence in VRChat and roleplays, and the challenges related to using them in Udon/SDK3. (SDK refers to VRC’s content development kit, and the number is the major version iteration; Udon is VRC’s proprietary visual scripting language.)

As long as roleplays significant enough to have their own world have been around, they have usually had “pickups”, which is a simple term that refers to any object that’s part of the world that the players can pick up and do stuff with. These can be all kinds of things; from cups, to frisbees, to firearms. In the context of roleplay, this can help a lot with letting players become immersed with the world. Something like, “You see those mountains? You can go there,” but instead, “You see that cup? You can pick it up.” Which in VR, can be a lot more important than one might expect.

The Difference Between Pickups in SDK2 and 3

Or, more precisely, the difference in spawning them at runtime. Simply having a pickup exist in the world is exactly the same between SDK2 and SDK3, but the major difference is that you cannot spawn objects in a networked way in SDK3. Clients being in a desynchronized state during roleplay is a situation we absolutely want to avoid, as there are few things more immersion-breaking. So that immediately takes that option off the table. As a replacement, VRC offers an object pooling solution, but it comes with a pretty major caveat: it only ensures that the state of the objects it contains is synchronized correctly across clients (and more recently, it hasn’t even done this correctly). This means that in order to actually use it, we have to write our own scripts that work around it. Of course, I wouldn’t be speaking about it currently if that wasn’t what I did.

Ultimately, this can be done relatively simply, but I went ahead and did some extra stuff to make things easier to use. It’s easy to make some scripts that can call the “TryToSpawn()” and “Return()” functions, but mine does a bit more than that. Notable features include:

- Keeping track of which pickups a specific user has spawned, so they can return all of their own pickups without touching anyone else’s.

- Being able to toggle the “AutoHold” setting across all of the pickups in the world, which allows the user to choose whether items stick to their hands without holding down the trigger or not.

- Full integration with the Admin Menu, including displays of how many pickups are currently spawned, the ability to spawn a pickup directly to your hand, and managing pickups entirely from the menu.
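To give a feel for the bookkeeping involved, here is a minimal sketch of a per-player tracker sitting on top of a pool. It is plain Python rather than Udon, it leaves out networking entirely, and every class and method name in it is hypothetical apart from the spawn and return calls being modeled on the “TryToSpawn()” and “Return()” functions mentioned above.

```python
from typing import Dict, List, Optional


class ObjectPool:
    """Stand-in for the platform's pool: it only hands objects out and takes them back."""

    def __init__(self, objects: List[str]) -> None:
        self._free = list(objects)
        self._active: List[str] = []

    def try_to_spawn(self) -> Optional[str]:
        # Returns None when every pooled object is already in use.
        if not self._free:
            return None
        obj = self._free.pop(0)
        self._active.append(obj)
        return obj

    def return_object(self, obj: str) -> None:
        # Objects that are not currently spawned are ignored.
        if obj in self._active:
            self._active.remove(obj)
            self._free.append(obj)


class PickupManager:
    """Adds per-player bookkeeping on top of the pool (hypothetical design)."""

    def __init__(self, pool: ObjectPool) -> None:
        self.pool = pool
        self.spawned_by: Dict[str, List[str]] = {}
        self.auto_hold = False  # one switch that would apply to every pickup

    def spawn_for(self, player: str) -> Optional[str]:
        obj = self.pool.try_to_spawn()
        if obj is not None:
            self.spawned_by.setdefault(player, []).append(obj)
        return obj

    def return_all_for(self, player: str) -> int:
        # Only touches pickups this player spawned; everyone else's stay out.
        mine = self.spawned_by.pop(player, [])
        for obj in mine:
            self.pool.return_object(obj)
        return len(mine)

    def spawned_count(self) -> int:
        # The kind of number an admin menu could display.
        return sum(len(objs) for objs in self.spawned_by.values())
```

The pool itself knows nothing about who asked for what, which is why the ownership table lives in a separate layer.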

Oh, and as a fun little tidbit (which, if you follow this project closely at all, you’ll already know about), a number of our pickups have a neat little script that allows for them to both float when dropped, and be thrown at the same time.

Pistols -or- Things That Go Bang

After chasing down a number of odd bugs, and despite the presence of things meant to mitigate it, the workshops for the map had some audio issues rear their ugly heads. After looking into it for a little while, the decision was made that the culprit was most likely avatar audio, and action was taken to limit the number of audio sources allowed on the avatars present during the workshops (and eventually, the roleplay itself). However, this comes with its own issue: the map will have to take up some of the things that would normally be relegated to avatars. At least for me, though, I saw this as an opportunity.

Guns are probably the number one thing I have always pursued in VRC. All the way from my very first avatar with a pistol and a firing animation, things escalated from there. So, I created a world-based firearm, with fully fleshed out animations, ammo tracking, and ballistics. Ultimately, this was a cathartic thing for me to work on, because it’s probably the first thing in both this and the SRP project where I haven’t had to work around VRC’s shortcomings at every turn. Though instead, I did have to spend a day cleaning up the model taken off of Sketchfab, which had some of the most atrocious vertex normals I have had to deal with, period. In the end, though, it came out looking quite sharp.

As for how the pistol’s scripting actually works, the simplified version is this: it runs some conditional logic based on which interaction the player performed, and each branch triggers a mix of animations, prefab spawns, and sounds. Even the ballistics systems (which currently lack penetration) weren’t that difficult to figure out.
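That flow can be sketched as a tiny state machine. This is plain Python rather than the actual Udon graph, and the magazine size, method names, and effect names are all made up for illustration; ballistics are left out.

```python
from typing import List


class Pistol:
    """Toy model of a world-based pistol: each interaction checks the ammo
    state and returns the list of effects that should be triggered."""

    def __init__(self, magazine_size: int = 8) -> None:
        self.magazine_size = magazine_size  # assumed value, not from the project
        self.rounds = magazine_size

    def pull_trigger(self) -> List[str]:
        if self.rounds > 0:
            # Loaded: consume a round, then animate, spawn a projectile, play audio.
            self.rounds -= 1
            return ["animation:fire", "prefab:bullet", "sound:gunshot"]
        # Empty: nothing is spawned, the player just hears a click.
        return ["sound:dry_click"]

    def reload(self) -> List[str]:
        self.rounds = self.magazine_size
        return ["animation:reload", "sound:reload"]
```

Keeping the ammo count as the only piece of state means every interaction boils down to one check followed by a fixed list of effects.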

Esarai’s Pickups

Esarai here. My comrades have already explained the importance of pickup objects in the context of a VR roleplay, but it bears reiterating that having an interactable item that is more than just set dressing greatly expands the scenarios and immersion achievable within the VR space. Roleplay is about improvisation and adaptation, and the details of a setting influence the actions a player will view as believable or even possible given their surroundings. It’s a lot easier to play out the act of cooking a meal for 30 people in a fully-stocked kitchen than it is to stand in an empty room and mime it. So as devs we want to add as much detail as we can to give our players cues and tools to work with.

Just having a static visual representation is not enough for us, as that ties the roleplay to a specific environment, and there are actions and activities that need to persist across locations. Suppose a player is a chef in a restaurant; they can pantomime cooking and serving an imaginary meal to the other players in the dining hall, but a player who enters the hall late will have no idea that food has already been served, or that the food is of exquisite quality. Pickups untether roleplay from a given environment and facilitate quick and intuitive communication of the state of play. With the scripting elements exposed to us by Udon, we can take this even further by making the pickups have triggerable effects that represent when an action is being taken without the need to narrate it. The more detailed and believable the props are, the better the experience for everyone.

This incentive toward detail is constrained by our chosen medium. VR itself is more graphically intensive than flat-screen games due to the need to render everything in higher resolution, at higher framerates, twice over (once for each eye). Many of our players are simultaneously attempting to encode and stream video while in our world, so in order for their streams to be as high quality as possible and their in-game experiences to be smooth and uninterrupted, we need to guarantee the world is as performant as possible. And by performant I mean frames. We need all the frames we can get.

In VRChat, worlds must be downloaded by every player who joins, and connection speeds are not consistent across players. To keep our roleplay as open and inclusive as we can, we strive to minimize the file size of our virtual spaceship so that players can enter the world quickly. This improves the experience for our players, and also helps a game session logistically by cutting the downtime spent waiting for everyone to load in.

The aforementioned constraints guide the creation of our pickups: they must be sufficiently detailed to be believable (while not so detailed as to degrade rendering performance), re-use as many assets as possible to keep the world file size down, and have interactable behaviours to expand the lexicon of actions available to our players.

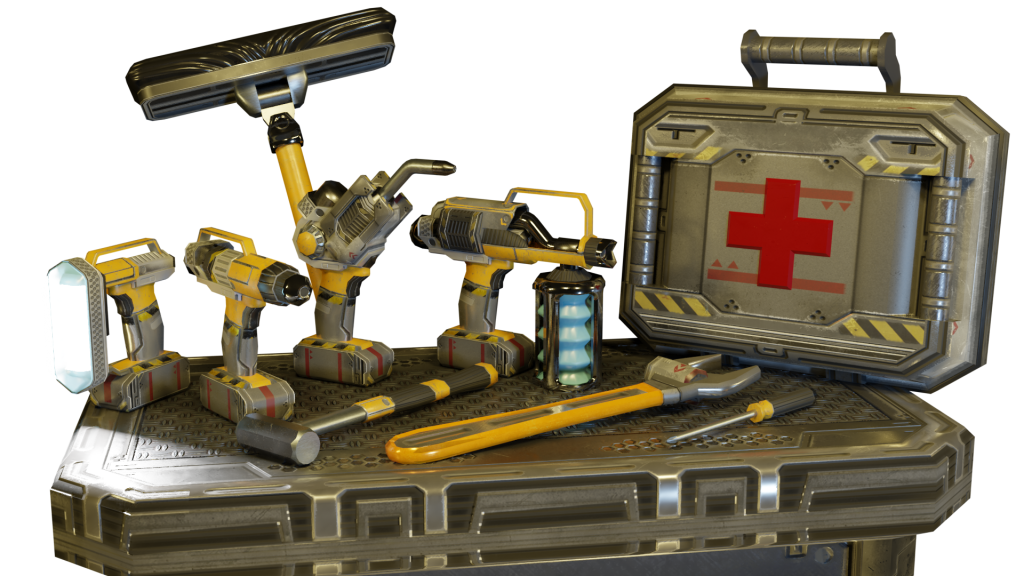

To start, we’ve created an arsenal of tools by re-using the texture atlases and trim sheets available from the world-building pack we are using:

And finally, a perfect example of the impact these can have on player agency:

The Big Day, Denied

And now, a short story by WaffleSlapper:

The day finally arrives for the premiere of Episode 1. We’re three Workshop/Non-Canon episodes in and the dev team is working off a combined total of about 5 hours of sleep due to everyone being in full “GET IT DONE” mode for the prior 3 weeks. I personally had gone through the process of setting everything back up for streaming, something I hadn’t done for about 2 or 3 years at that point. I finally hit End of Day at my work, hurriedly dump everything in my laptop bag, and rush my ass home (at the speed limit, I swear) for the pre-episode test.

I had just used my phone and Chrome Remote Desktop a couple hours prior to remote into my home computer, pull the latest changes to the map, rebuild the lighting, and get everything published to VRC’s servers. This was my normal afternoon break process for Build Days. The map was ready to go by the time I got home, and so I popped into VR and invited Esarai and Kaderen into the map to make sure everything was working as intended. Turns out, it wasn’t.

We three pile into the map and rush around the place to test all the things and quickly find out that, for some reason, the Prefab bindings on all the Kitchen item spawners and the Pistols all got wiped during one of the Git merges on the scene. No idea how, but back into Unity I went. I fix all those issues and begin to rebuild the map, and shortly through that process I’m wandering through the new Medical Ward area and immediately proceed to fall through the floor. GOODIE! I wail on the Cancel button for the build process to no avail, show time is in 10 minutes, aw crap. Eventually, I just ended up Force Quitting the Unity process and rebooting, as it would be faster to do that instead of waiting for whatever it was doing at the time.

Back into the editor, I open up the Ward files to find… yet more Prefab Overrides, this time setting the Vent model to the wrong goddamn layer (PlayerLocal is my bane). Fixed that, restarted the build process, T-Minus 5 minutes. Stress.

In the middle of the THIRD build of the day, Esarai is talking to me in VRC and says, “I have an idea” and brings his in-game hands down onto a barrel model to emphasize a point. What that point was, I probably will never know, as he completely froze up in that very instant. I pop into the Discord call to regale everyone with that funny little anecdote and, in the middle of that, my connection to VRChat times out. Great. While I’m complaining about that in the Discord call, Discord drops out. RUH ROH!

For some reason, half the people in Discord suddenly dropped out at the exact same time as VRC detonating in my face. However, my upload completes, Discord comes back, and we test the map changes and find no further issues. So we give the green light, and the Admin team opens the floodgates on the 50+ people waiting to join the show. But, well, not all of them can get in; they keep getting dropped onto their own instance of the map with nobody else in sight. VRC just refuses to play ball. For the next 2 hours or so, the team attempted to salvage the show, but eventually gave up after multiple session restarts and map switches failed to get everyone in, and we made Episode 1 into Workshop 4.

And away we go…

My apologies for taking so long to get this out the door. We had planned to get this post going before the second attempt at Episode 1, but the Thanksgiving holidays put the kibosh on that, and now we’re about to head into Episode 3 next Wednesday.

In the future, we plan on releasing more of these posts, at a GREATLY reduced length, with hopefully fewer delays as we head into 2022. However, we already have a great swath of things that have changed since writing this post initially, so January’s post will likely be quite long as well.

If you wish to support this project, our team has a Patreon set up where you can read these Dev Logs a week early, before I drop them on here. In addition to all this, this project has gotten me back into streaming as well, and I’ve been recording my POV on Twitch. I hope to see you all there!